Explain TCP and UDP operations

TCP and UDP are the two major protocols operating at the transport layer. They behave differently, and the one to use is chosen according to the application's requirements. TCP stands for Transmission Control Protocol; it provides reliable, guaranteed delivery of data packets. UDP stands for User Datagram Protocol; it operates in datagram mode. TCP is a connection-oriented protocol, whereas UDP is connectionless. The following sections explain how TCP and UDP operate.

1.4 Explain TCP operations

TCP is referred to as a reliable protocol. It is responsible for breaking messages up into TCP segments and reassembling them at the receiving side. The main purpose of TCP is to provide a reliable logical connection, service, or circuit between pairs of processes. Offering this kind of service on top of a less reliable internet communication system requires facilities in areas such as basic data transfer, reliability, flow control, multiplexing, connections, precedence, and security. Among these, flow control and error recovery are TCP's main functions. Because TCP is connection-based, it establishes a connection before any data is exchanged and terminates the connection upon completion.
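To make the reassembly step concrete, here is a minimal receiver-side sketch. It assumes each arriving segment is represented as a hypothetical `(sequence_number, payload)` pair and ignores sequence-number wraparound and overlapping segments; it is an illustration of the idea, not how a real TCP stack is written.

```python
def reassemble(segments, initial_seq):
    """Rebuild a byte stream from (sequence_number, payload) pairs.

    Simplifications assumed for this sketch: no sequence-number wraparound,
    no overlapping segments, and a duplicate simply overwrites the earlier copy.
    """
    pending = dict(segments)              # seq -> payload, whatever order they arrived in
    stream = bytearray()
    next_seq = initial_seq                # first byte not yet delivered to the application
    while next_seq in pending:
        payload = pending.pop(next_seq)
        stream += payload
        next_seq += len(payload)
    # Anything still in `pending` sits beyond a gap and must wait for a retransmission.
    return bytes(stream), next_seq


# Segments arrive out of order but are placed by sequence number.
print(reassemble([(1006, b"world"), (1000, b"hello "), (1011, b"!")], 1000))
```

The second value returned is the next byte the receiver expects, which is exactly what it would acknowledge.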

During connection establishment, the client and server agree on initial sequence and acknowledgment numbers, and the client implicitly tells the server its source port. The sequence number is a field of every TCP segment. It starts from a random initial value, and each time a new segment is sent, the sequence number is incremented by the number of bytes sent in the previous TCP segment. The acknowledgment number works the same way, but from the receiver's side: a pure ACK segment carries no data, and its acknowledgment number equals the sender's sequence number increased by the number of bytes received. The ACK segment confirms that the host has received the data that was sent.
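The sequence and acknowledgment arithmetic described above can be sketched as follows. This is a simplified model under stated assumptions: numbering starts the data at the random initial value itself (in real TCP the SYN and FIN each consume one sequence number, which is not modeled here), and real stacks choose the initial value with a more careful scheme than a plain random draw. The function names are hypothetical.

```python
import random

SEQ_SPACE = 2 ** 32   # TCP sequence numbers are 32-bit values that wrap around


def segment_sequence_numbers(initial_seq, payload_sizes):
    """Sequence number carried by each data segment, in sending order."""
    seqs = []
    seq = initial_seq
    for size in payload_sizes:
        seqs.append(seq)
        seq = (seq + size) % SEQ_SPACE      # advance by the bytes just sent
    return seqs


def ack_number(seq, payload_len):
    """Acknowledgment number a receiver returns after accepting this segment in order."""
    return (seq + payload_len) % SEQ_SPACE


isn = random.randrange(SEQ_SPACE)           # simplified random initial sequence number
sizes = [100, 200, 50]
for seq, size in zip(segment_sequence_numbers(isn, sizes), sizes):
    print(f"seq={seq} len={size} -> ack={ack_number(seq, size)}")
```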

Because TCP is connection-oriented, devices must open a connection before transferring data and must close it gracefully afterwards. TCP also ensures reliable delivery of data to the destination. It offers extensive error-checking mechanisms, including acknowledgment of data and the flow control described above, and these mechanisms are the reason TCP is relatively slow compared with UDP. Multiplexing and demultiplexing are possible in TCP by means of TCP port numbers, and lost packets are retransmitted.
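Demultiplexing by port numbers can be illustrated with a small lookup table. In TCP a connection is identified by the combination of source IP, source port, destination IP, and destination port, so two clients talking to the same server port still land in different queues. The class and method names below are hypothetical; this is a sketch of the idea, not an operating-system API.

```python
from typing import Dict, List, NamedTuple


class FourTuple(NamedTuple):
    src_ip: str
    src_port: int
    dst_ip: str
    dst_port: int


class TcpDemultiplexer:
    """Hypothetical receive-side table mapping each connection to its own queue."""

    def __init__(self) -> None:
        self.queues: Dict[FourTuple, List[bytes]] = {}

    def open(self, conn: FourTuple) -> None:
        self.queues[conn] = []          # created when the connection is established

    def deliver(self, conn: FourTuple, payload: bytes) -> bool:
        queue = self.queues.get(conn)
        if queue is None:
            return False                # unknown connection: a real stack would typically reply with RST
        queue.append(payload)
        return True


demux = TcpDemultiplexer()
a = FourTuple("198.51.100.7", 50000, "192.0.2.10", 80)
b = FourTuple("198.51.100.7", 50001, "192.0.2.10", 80)   # same server port, different client port
demux.open(a)
demux.open(b)
demux.deliver(a, b"GET /a")
demux.deliver(b, b"GET /b")
```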

1.4.a IPv4 and IPv6 (P) MTU

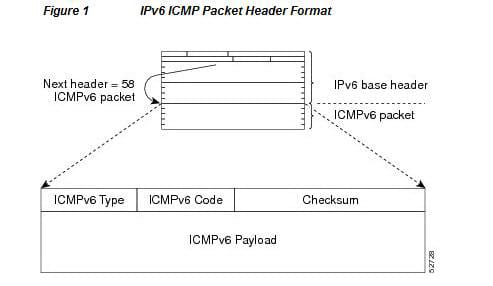

A larger maximum transmission unit (MTU) brings greater efficiency, because each packet's fixed header overhead is spread over more data. The MTU is an essential concept in packet-switching systems. The path MTU equals the smallest link MTU on the path from the source to the destination. Packetization Layer Path MTU Discovery (PLPMTUD, RFC 4821) relies on TCP to probe an internet path with progressively larger packets. It is most efficient when used in conjunction with the ICMP-based path MTU discovery mechanisms specified in RFC 1191 (IPv4) and RFC 1981 (IPv6), but it resolves many robustness problems of those classic techniques, since it never depends on the delivery of ICMP messages.
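The two ideas in this paragraph, the path MTU as a minimum over links and discovery by probing with larger packets, can be sketched as below. The `probe_ok` callback is a hypothetical stand-in for "send a probe of this size and see whether it is acknowledged", and the binary search is just one simple search strategy assumed for illustration; RFC 4821 does not prescribe this exact loop.

```python
def path_mtu(link_mtus):
    """The path MTU is the smallest MTU of any link on the path."""
    return min(link_mtus)


def probe_path_mtu(probe_ok, low, high):
    """Find the largest packet size in [low, high] for which probe_ok(size) is True.

    Assumes probe_ok(low) is True and that success is monotonic: if a size
    gets through, every smaller size also gets through.
    """
    while low < high:
        mid = (low + high + 1) // 2      # round up so the loop always makes progress
        if probe_ok(mid):
            low = mid                    # the probe got through: try larger sizes
        else:
            high = mid - 1               # the probe was lost: the path MTU is smaller
    return low


links = [1500, 1400, 1500]               # assumed example path
print(path_mtu(links))
print(probe_path_mtu(lambda size: size <= path_mtu(links), 1280, 9000))
```

Because probing only needs to learn whether a packet arrived, it keeps working even when ICMP messages are filtered along the path.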

Internet Protocol version 6 (IPv6), also known as IP next generation (IPng), was proposed by the IETF as the successor to Internet Protocol version 4 (IPv4). The most significant difference between the two versions is that IPv6 increases the IP address size from 32 bits to 128 bits.
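The difference in address size can be checked with Python's standard `ipaddress` module. The helper name `address_bits` is hypothetical; the two sample addresses come from the ranges reserved for documentation.

```python
import ipaddress


def address_bits(text):
    """Return (IP version, address length in bits) for an address string."""
    addr = ipaddress.ip_address(text)
    return addr.version, len(addr.packed) * 8   # packed is the raw address in bytes


print(address_bits("192.0.2.1"))     # (4, 32)
print(address_bits("2001:db8::1"))   # (6, 128)
print(2 ** 32, 2 ** 128)              # number of possible addresses in each space
```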

The links that a packet passes through from the source to the destination have a variety of different MTUs. In IPv6, when the packet size exceeds the link MTU, the packet is fragmented only at the source (IPv6 routers do not fragment packets), which reduces the processing load on forwarding devices and uses network resources rationally. The PMTU mechanism identifies the minimum MTU on the source-to-destination path.
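Source fragmentation in IPv6 can be illustrated with a little arithmetic. This sketch assumes the packet has no extension headers other than the Fragment header (40-byte IPv6 header plus 8-byte Fragment header per fragment) and uses the IPv6 minimum link MTU of 1280 bytes as the default; the function name is hypothetical.

```python
IPV6_HEADER = 40       # fixed IPv6 header, bytes
FRAGMENT_HEADER = 8    # IPv6 Fragment extension header, bytes


def ipv6_fragment_sizes(fragmentable_bytes, path_mtu=1280):
    """Data sizes of the fragments a source would emit for one oversized packet.

    Every fragment except the last must carry a multiple of 8 bytes, because
    the fragment offset field counts 8-byte units.
    """
    max_chunk = (path_mtu - IPV6_HEADER - FRAGMENT_HEADER) // 8 * 8
    sizes = []
    remaining = fragmentable_bytes
    while remaining > 0:
        chunk = min(max_chunk, remaining)
        sizes.append(chunk)
        remaining -= chunk
    return sizes


print(ipv6_fragment_sizes(3000))          # path MTU at the IPv6 minimum of 1280 bytes
print(ipv6_fragment_sizes(3000, 1500))    # typical Ethernet path MTU
```

Only the source does this work; a router that receives a packet too large for the next link drops it and reports the problem back, which is what lets the source learn the path MTU.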

1.4.b MSS

MSS stands for maximum segment size. It is a TCP parameter that specifies the largest amount of data a device will accept in a single TCP segment. The default TCP MSS is 536 bytes (for IPv4, when no MSS option is exchanged). Each TCP device has a ceiling on segment size that it will not exceed, regardless of how large the current window is; this ceiling is the maximum segment size. To decide how much data to put into a segment, each device in a TCP connection chooses the amount based on the current window size in conjunction with various algorithms, but never so large that the data exceeds the MSS of the device to which it is sent.
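The sizing rule in this paragraph, that each segment is limited by the data waiting, the window, and the peer's MSS, can be sketched like this. It is a simplified model: the function name is hypothetical, and it ignores refinements such as Nagle's algorithm and silly-window-syndrome avoidance that real stacks apply.

```python
def plan_segments(unsent_bytes, window_bytes, peer_mss):
    """Sizes of the segments sent before the sender must wait for an acknowledgment."""
    sizes = []
    while unsent_bytes > 0 and window_bytes > 0:
        size = min(unsent_bytes, window_bytes, peer_mss)   # never above the peer's MSS
        sizes.append(size)
        unsent_bytes -= size
        window_bytes -= size
    return sizes


print(plan_segments(4000, 65535, 1460))   # the MSS is the limit
print(plan_segments(4000, 2000, 1460))    # the window runs out first
```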

It is the largest amount of data that a computer or communication device can handle in a single, unfragmented piece. For optimum communication, the number of bytes in the data segment plus the headers must not exceed the number of bytes in the MTU. The MSS is an important consideration for internet connections, especially web browsing. When TCP is used to establish an internet connection, each computer announces its MSS in the connection setup (SYN) segment, so that segments are sized to be acceptable to both. Typical MTU sizes for home internet connections are 1500 or 576 bytes. The headers are usually 40 bytes long (a 20-byte IPv4 header plus a 20-byte TCP header), and the MSS is the difference: 1460 or 536 bytes. In some cases the MTU is smaller than 576 bytes, and data segments are then smaller than 536 bytes.

As data is routed over the internet, it must pass through multiple gateway routers. Ideally, each data segment passes through every router without being fragmented. If a data segment is too large for any router along the way, the oversized segment is fragmented, which slows down the connection as seen by the user; in some instances the slowdown is dramatic. The likelihood of such fragmentation can be minimized by keeping the MSS small enough that every segment fits within the smallest MTU on the path. For most users, the MSS is set automatically by the operating system.
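The MTU-to-MSS arithmetic above can be written as a one-line calculation. This sketch assumes headers without options (20-byte IPv4 header, 40-byte IPv6 header, 20-byte TCP header); options would reduce the MSS further. The function name is hypothetical.

```python
IPV4_HEADER = 20   # bytes, no options
IPV6_HEADER = 40   # bytes, no extension headers
TCP_HEADER = 20    # bytes, no options


def mss_for_mtu(mtu, ipv6=False):
    """Largest TCP payload that fits in one packet of the given MTU."""
    ip_header = IPV6_HEADER if ipv6 else IPV4_HEADER
    return mtu - ip_header - TCP_HEADER


print(mss_for_mtu(1500))              # 1460
print(mss_for_mtu(576))               # 536
print(mss_for_mtu(1500, ipv6=True))   # 1440
```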

1.4.c Latency

The speed of a data transfer such as a TCP session is largely determined by line speed, but delay also matters. Delay is measured as the round-trip time (RTT) of each data packet. Regardless of processor speed or software efficiency, it takes a finite amount of time to manipulate and present data. Whether the application is a web page showing a live camera feed or a news shot of a traffic jam, latency can affect it in many ways. The key causes of latency are data protocols, propagation delay, serialization, switching and routing, and buffering and queuing. Any time the client computer sends the server a request, there is an RTT delay until it receives the response. Data packets must travel through a number of high-traffic routers, and given the huge distances involved in internet communication, the speed of light is always a limiting factor.
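Two of the latency causes listed above, propagation and serialization, are easy to estimate. The sketch below models a single link and assumes a signal speed in optical fiber of about 2e8 m/s (roughly two-thirds of the speed of light in a vacuum); queuing is passed in as an assumed value because it depends on traffic. All names are hypothetical.

```python
# Assumed value for illustration: light in optical fiber travels at roughly
# 2e8 m/s, about two-thirds of its speed in a vacuum.
SPEED_IN_FIBER_M_PER_S = 2e8


def propagation_delay(distance_m):
    """Time for a signal to cross distance_m of fiber, in seconds."""
    return distance_m / SPEED_IN_FIBER_M_PER_S


def serialization_delay(packet_bytes, link_bps):
    """Time to clock every bit of one packet onto the link, in seconds."""
    return packet_bytes * 8 / link_bps


def one_way_delay(distance_m, packet_bytes, link_bps, queuing_s=0.0):
    """Single-link estimate: propagation + serialization + time waiting in buffers."""
    return propagation_delay(distance_m) + serialization_delay(packet_bytes, link_bps) + queuing_s


# A 1500-byte packet over 1000 km of fiber on a 10 Mbit/s link.
print(one_way_delay(1_000_000, 1500, 10e6))
```

A faster link shrinks only the serialization term; the propagation term is fixed by distance, which is why long paths stay slow no matter how much bandwidth is added.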

1.4.d Windowing

The throughput of a TCP connection is limited by two windows: the congestion window and the receive window. Every TCP segment carries the current value of the receive window. The receive window provides flow control, while the congestion window is used to avoid congestion in the network. Together they control the amount of unacknowledged data that a sender may send before it gets an acknowledgment back from the receiver.
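The combined effect of the two windows can be sketched as a single calculation: the sender is allowed the smaller of the two windows, minus the data already in flight. The function name and the 1460-byte segment size in the example are assumptions for illustration.

```python
def usable_window(cwnd_bytes, rwnd_bytes, bytes_in_flight):
    """How many more bytes the sender may transmit right now.

    The effective window is the smaller of the congestion window (the sender's
    estimate of what the network can take) and the receive window (what the
    receiver advertised). Data sent but not yet acknowledged uses it up.
    """
    effective = min(cwnd_bytes, rwnd_bytes)
    return max(0, effective - bytes_in_flight)


MSS = 1460                                        # assumed segment size
print(usable_window(10 * MSS, 65535, 6 * MSS))    # the congestion window is the limit
print(usable_window(100_000, 20_000, 20_000))     # the receive window is already full
```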

1.4.e Bandwidth-delay product

The bandwidth-delay product (BDP) is the amount of data that can be in transit in the network at any moment: the link bandwidth multiplied by the round-trip time. It is an important concept in window-based protocols such as TCP, because a sender can keep the link full only if its window is at least as large as the BDP. The TCP receive window (RWIN) therefore limits the connection: throughput cannot exceed RWIN / latency (RTT). To use the link fully, the sender must be able to send a full BDP of data before it can reasonably expect an acknowledgment.
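Both quantities in this paragraph are simple products and quotients, sketched below. The 100 Mbit/s link and 50 ms RTT are assumed example values, and 65,535 bytes is the largest receive window a TCP header can advertise without the window scale option. Function names are hypothetical.

```python
def bdp_bytes(bandwidth_bps, rtt_s):
    """Bandwidth-delay product: bytes that can be in flight on the path."""
    return bandwidth_bps * rtt_s / 8


def max_throughput_bps(rwnd_bytes, rtt_s):
    """Upper bound on throughput imposed by the receive window: RWIN / RTT."""
    return rwnd_bytes * 8 / rtt_s


link_bps, rtt = 100e6, 0.050                 # assumed example: 100 Mbit/s path, 50 ms RTT
print(bdp_bytes(link_bps, rtt))              # bytes needed in flight to fill the link
print(max_throughput_bps(65535, rtt))        # ceiling with a 65,535-byte window
```

With these numbers the BDP is 625,000 bytes, but a 65,535-byte window caps the connection at about 10.5 Mbit/s, roughly a tenth of the link. This is the gap that the TCP window scale option (RFC 7323) was introduced to close.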

1.4.f Global synchronization

TCP global synchronization happens to TCP flows during periods of congestion, because every sender reduces its transmission rate at the same time when packet loss occurs. All the TCP streams behave the same way, so they eventually become synchronized, ramping up until they cause congestion and then backing off at roughly the same rate. This produces the familiar sawtooth-shaped bandwidth utilization graph. RED (random early detection) and WRED (weighted RED) help alleviate it by dropping packets from randomly chosen flows before the queue is full, so that the flows do not all back off at once.
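A toy model makes the effect visible. The sketch below assumes identical flows doing additive increase and multiplicative decrease (AIMD) on one bottleneck, measured in segments per RTT. In `"tail_drop"` mode a full queue hits every flow at once; in `"random_early"` mode losses are spread to randomly chosen flows one at a time until the load fits. That second mode is a crude stand-in for the idea behind RED/WRED, not the real RED algorithm, and all names and parameters are hypothetical.

```python
import random


def simulate(num_flows, capacity, rtts, mode, seed=0):
    """Toy AIMD model. Returns (total load per RTT, per-flow windows per RTT)."""
    rng = random.Random(seed)
    cwnd = [1.0] * num_flows
    totals, history = [], []
    for _ in range(rtts):
        cwnd = [w + 1 for w in cwnd]                  # additive increase every RTT
        if sum(cwnd) > capacity:                      # the bottleneck queue overflows
            if mode == "tail_drop":
                cwnd = [w / 2 for w in cwnd]          # every flow sees a loss and halves together
            else:
                while sum(cwnd) > capacity:
                    i = rng.randrange(num_flows)      # one randomly chosen flow loses a packet
                    cwnd[i] /= 2
        totals.append(sum(cwnd))
        history.append(list(cwnd))
    return totals, history


tail_totals, tail_windows = simulate(10, 100, 50, "tail_drop")
red_totals, red_windows = simulate(10, 100, 50, "random_early")
print([round(t, 1) for t in tail_totals[8:16]])   # repeated halving: the sawtooth
```

In the tail-drop run every flow keeps exactly the same window at every step, so the total load repeatedly falls to about half the capacity. In the random-early run the windows diverge after the first congestion event, which is the desynchronization RED and WRED aim for.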

1.5 Describe UDP operations

The User Datagram Protocol (UDP) is a datagram-oriented protocol with no overhead for opening a connection with a three-way handshake, closing the connection, or maintaining it. This makes UDP very efficient for multicast and broadcast network transmission. Its only error-checking mechanism is the checksum. UDP does not sequence data, and delivery is not guaranteed. It is simpler, more efficient, and faster than TCP, although it is less robust. Multiplexing and demultiplexing are possible in UDP by means of UDP port numbers. There is no retransmission of lost packets in UDP.
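The absence of connection setup is easy to see with Python's standard `socket` module. In this sketch, which runs entirely on the loopback interface, the sender never calls `connect` or waits for a handshake; it simply addresses a datagram to the receiver's port. The helper name is hypothetical, and the timeout is there because UDP itself offers no delivery guarantee.

```python
import socket


def udp_round_trip(message: bytes) -> bytes:
    """Send one datagram to a local receiver and return what it got."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as receiver, \
         socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sender:
        receiver.bind(("127.0.0.1", 0))     # port 0: let the operating system pick a free port
        receiver.settimeout(2.0)            # never wait forever for a datagram that may not come
        # No handshake: the sender just addresses each datagram to (IP, port).
        sender.sendto(message, receiver.getsockname())
        data, _sender_addr = receiver.recvfrom(65535)
        return data


print(udp_round_trip(b"hello"))
```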

Because UDP is a connectionless protocol, it is much less reliable than TCP. It performs only basic error checking and offers no sequencing of data, so datagrams may arrive at the destination device in a different order from the one in which they were sent. This happens in large networks such as the internet, where datagrams take different paths to the destination and experience different delays in different routers. UDP is essentially IP with transport-layer port addressing, which is why it is sometimes called a wrapper protocol.

The last 16 bits of the UDP header hold the checksum value, which is used as an error-detection tool. The checksum calculation also covers a 12-byte pseudo-header (for IPv4) that includes the source and destination IP addresses, along with the protocol number and the UDP length. The pseudo-header makes it possible to check that the IP datagram arrived at the correct station.
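The checksum described above can be computed with the standard library. This sketch covers IPv4 only and follows the usual 16-bit one's-complement method: the sum runs over the 12-byte pseudo-header, the 8-byte UDP header (with the checksum field set to zero), and the payload. A computed value of zero is sent as all ones, because zero in this field means "no checksum". The function names are hypothetical.

```python
import ipaddress
import struct

UDP_PROTOCOL = 17   # IP protocol number for UDP


def ones_complement_sum16(data):
    """16-bit one's-complement sum with end-around carry."""
    if len(data) % 2:
        data += b"\x00"                              # pad odd-length input with a zero byte
    total = 0
    for (word,) in struct.iter_unpack("!H", data):
        total += word
        total = (total & 0xFFFF) + (total >> 16)     # fold the carry back in
    return total


def pseudo_header(src_ip, dst_ip, udp_length):
    """12-byte IPv4 pseudo-header: source, destination, zero, protocol, UDP length."""
    return struct.pack("!4s4sBBH",
                       ipaddress.IPv4Address(src_ip).packed,
                       ipaddress.IPv4Address(dst_ip).packed,
                       0, UDP_PROTOCOL, udp_length)


def build_udp_datagram(src_ip, dst_ip, src_port, dst_port, payload):
    length = 8 + len(payload)                                    # 8-byte header + data
    header = struct.pack("!HHHH", src_port, dst_port, length, 0)  # checksum field zeroed
    checksum = ~ones_complement_sum16(pseudo_header(src_ip, dst_ip, length) + header + payload) & 0xFFFF
    if checksum == 0:
        checksum = 0xFFFF                            # zero means "no checksum", so send all ones
    return struct.pack("!HHHH", src_port, dst_port, length, checksum) + payload


def verify_udp_datagram(src_ip, dst_ip, datagram):
    if struct.unpack("!H", datagram[6:8])[0] == 0:
        return True                                  # the sender did not compute a checksum
    return ones_complement_sum16(pseudo_header(src_ip, dst_ip, len(datagram)) + datagram) == 0xFFFF


dgram = build_udp_datagram("192.0.2.1", "192.0.2.2", 5000, 53, b"hello")
print(verify_udp_datagram("192.0.2.1", "192.0.2.2", dgram))   # correct destination
print(verify_udp_datagram("192.0.2.1", "192.0.2.3", dgram))   # wrong destination is detected
```

The second check fails even though the UDP bytes are untouched, which is the point of including the IP addresses through the pseudo-header.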

1.5.a Starvation

TCP starvation, or UDP dominance, occurs during congestion when TCP and UDP streams are assigned to the same class. UDP has no flow control that makes it back off when congestion takes place, but TCP does, so TCP backs off and yields more and more bandwidth to the UDP streams, to the point where UDP takes over completely. WRED does not help, because the drops it causes do not make UDP streams slow down. The best way to resolve the issue is to classify TCP and UDP streams into separate classes wherever possible.
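A toy model shows why TCP loses out. The sketch assumes one TCP flow doing additive increase and halving its window on loss, sharing a link with UDP traffic that keeps sending at a fixed rate whatever happens; units are segments per RTT, and the function name and parameters are hypothetical.

```python
def tcp_average_share(capacity, udp_rate, rtts=200):
    """Average rate TCP gets when sharing a link with non-responsive UDP traffic."""
    cwnd = 1.0
    delivered = 0.0
    for _ in range(rtts):
        cwnd += 1                                   # TCP probes for more bandwidth
        if cwnd + udp_rate > capacity:              # congestion: packets are dropped
            cwnd /= 2                               # only TCP backs off; UDP ignores the loss
        delivered += min(cwnd, max(capacity - udp_rate, 0.0))   # TCP gets only what UDP leaves
    return delivered / rtts


for udp in (0, 60, 100):
    print(udp, tcp_average_share(100, udp))
```

As the UDP rate approaches the link capacity, TCP's share falls to zero while UDP is unaffected, which is the starvation described above.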

1.5.b Latency

Latency is the end-to-end delay. Because UDP is connectionless, the real effect of latency on a UDP stream is simply a greater delay between the sender and the receiver. Jitter is the variation in latency, and it causes problems for UDP streams. Jitter can be smoothed out by buffering.
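Jitter and buffering can both be sketched from per-packet send and arrival times. The jitter estimate below uses the running interarrival-jitter formula from the RTP specification (RFC 3550, section 6.4.1), J = J + (|D| - J) / 16, where D is the change in transit time between consecutive packets. The playout check assumes a fixed playout delay: each packet is scheduled for playback at its send time plus that delay, and a packet arriving after its slot counts as late. Times are in milliseconds; function names and sample data are hypothetical.

```python
def interarrival_jitter(send_times, recv_times):
    """Running jitter estimate in the style of RFC 3550, section 6.4.1."""
    jitter = 0.0
    prev_transit = None
    for sent, received in zip(send_times, recv_times):
        transit = received - sent
        if prev_transit is not None:
            d = abs(transit - prev_transit)          # change in one-way delay
            jitter += (d - jitter) / 16              # smoothed running average
        prev_transit = transit
    return jitter


def late_packets(send_times, recv_times, playout_delay):
    """Count packets that arrive after their scheduled playback time."""
    return sum(1 for s, r in zip(send_times, recv_times) if r > s + playout_delay)


send = [0, 20, 40, 60]          # a packet every 20 ms
recv = [50, 90, 95, 150]        # variable network delay
print(interarrival_jitter(send, recv))
print(late_packets(send, recv, 60), late_packets(send, recv, 100))
```

A larger buffer (playout delay) hides more jitter, at the cost of adding that delay to every packet.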

The sections above explain TCP and UDP operations in detail, and it is also essential to understand the differences between the two. Connection-oriented and connectionless protocols are used in a variety of settings, depending on the usage and requirements. This explanation covers MSS, latency, global synchronization, bandwidth-delay product, windowing, and IPv4 and IPv6 path MTU under TCP operations, and latency and starvation under UDP operations.
